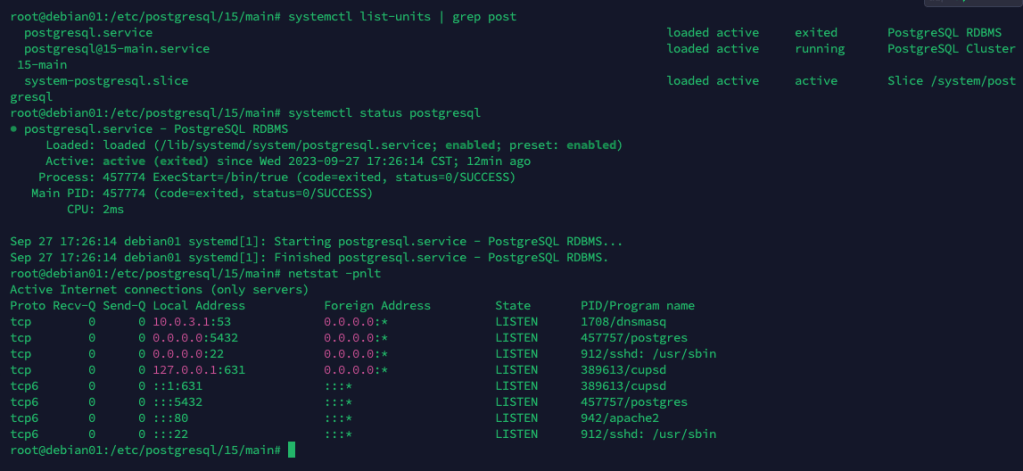

netstat has long been a useful tool for displaying network-related information on Linux systems. However, the net-tools package it belongs to is deprecated in favor of the iproute2 tools ss and ip, which cover the same monitoring and management tasks. They are actively maintained and generally faster, particularly on hosts with many open sockets. Here are the two primary tools that replace netstat on Linux:

`ss` (Socket Statistics): `ss` is a replacement for `netstat` and is part of the iproute2 package. It displays detailed information about network sockets (TCP, UDP, raw, and Unix domain), including their state, addresses, and queue sizes. Interfaces and routing tables are handled by `ip` rather than `ss`. `ss` provides a more efficient and flexible way to monitor and troubleshoot network connections. Some common `ss` commands include:

- Display all listening and non-listening sockets: `ss -a`
- List TCP sockets (connected ones only, unless `-a` or `-l` is added): `ss -t`
- Show UDP sockets: `ss -u`
- Display established TCP connections: `ss -t -a state established`
- Show listening TCP ports: `ss -t -l`
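Because `ss` prints one socket per line, its output can be summarized with standard text tools. The following is a minimal sketch, not a definitive script: `count_states` is a hypothetical helper name, and the sample input only imitates the column layout of `ss -tan` with made-up addresses.

```bash
# Count sockets per TCP state from `ss -tan`-style output read on stdin.
# count_states is a hypothetical helper name, not part of iproute2.
count_states() {
    # Skip the header row (first field "State") and blank lines,
    # tally the first field, then sort for stable output.
    awk '$1 != "State" && NF > 0 { n[$1]++ } END { for (s in n) print s, n[s] }' | sort
}

# Sample input shaped like `ss -tan` output (addresses are invented).
sample='State  Recv-Q Send-Q Local Address:Port  Peer Address:Port
LISTEN 0      128    0.0.0.0:22          0.0.0.0:*
ESTAB  0      0      192.168.1.10:22     192.168.1.5:51234
ESTAB  0      0      192.168.1.10:22     192.168.1.6:40022'

printf '%s\n' "$sample" | count_states
# On a live system: ss -tan | count_states
```

Reading from stdin keeps the helper independent of how `ss` is invoked, so the same function works with other flag combinations as long as the state stays in the first column.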

`ip` (iproute2): The `ip` command is a versatile utility for configuring and managing network interfaces, addresses, routes, and more. While it's not a drop-in replacement for `netstat`, it covers netstat's interface and routing-table views and can also change the configuration it displays. Some `ip` commands include:

- Show information about all network interfaces and their addresses: `ip a`
- Display routing table information: `ip route`
- Show statistics for a specific interface (e.g., eth0): `ip -s link show dev eth0`
- Add a default route through a gateway (requires root; use `ip route replace` to change an existing route): `ip route add default via 192.168.1.1`
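For scripting, `ip` also has a brief output mode (`-br`) that prints one interface per line with its name, state, and addresses. The sketch below assumes that layout: `up_interfaces` is a hypothetical helper name, and the sample lines are invented to mimic `ip -br addr show`.

```bash
# List interfaces whose state column is UP, from `ip -br addr`-style
# input on stdin. up_interfaces is a hypothetical helper name.
up_interfaces() {
    # Field 1 is the interface name, field 2 its operational state.
    awk '$2 == "UP" { print $1 }'
}

# Sample input shaped like `ip -br addr show` output (values are invented).
sample='lo     UNKNOWN  127.0.0.1/8 ::1/128
eth0   UP       192.168.1.10/24
wlan0  DOWN'

printf '%s\n' "$sample" | up_interfaces
# On a live system: ip -br addr show | up_interfaces
```

Note that the loopback interface typically reports UNKNOWN rather than UP, so it is not matched by this filter.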

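Both ss and ip offer improved performance and more detailed information than netstat, and they are installed by default on most modern Linux distributions. Read-only queries generally work as an ordinary user, but some operations need superuser (root) privileges or sudo: for example, ss -p shows process details only for sockets owned by the current user unless it is run as root, and commands that change configuration, such as ip route add, require root. You can refer to their respective man pages (man ss and man ip) for detailed usage and command options.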

While these tools have largely replaced netstat on modern Linux systems, some distributions still ship netstat (through the net-tools package) for compatibility. Using ss and ip is nevertheless recommended, since they are actively maintained and keep pace with newer kernel networking features.
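When migrating old habits or scripts, it can help to keep the common netstat invocations next to their usual iproute2 counterparts. The following lookup is an illustrative sketch only: `netstat_equiv` is a hypothetical helper name, the mapping covers just a handful of options, and the output formats of the two tool families differ, so the equivalents are approximate.

```bash
# Print the usual iproute2 counterpart of a few common netstat options.
# netstat_equiv is a hypothetical helper; the mapping is approximate
# and deliberately incomplete.
netstat_equiv() {
    case "$1" in
        -a)      echo "ss -a" ;;        # all sockets
        -tulpn)  echo "ss -tulpn" ;;    # listening TCP/UDP sockets with processes
        -r|-rn)  echo "ip route" ;;     # routing table
        -i)      echo "ip -s link" ;;   # per-interface statistics
        -g)      echo "ip maddr" ;;     # multicast group memberships
        *)       echo "no mapping for: $1" >&2; return 1 ;;
    esac
}

netstat_equiv -r
netstat_equiv -i
```

Returning a non-zero status for unknown options lets a calling script detect invocations that still need to be translated by hand.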